Introduction: The Limits of Traditional Computing

For over half a century, the digital world has been dominated by the von Neumann architecture, a fundamental computing model where the central processing unit (CPU) and the memory are physically separate, requiring data to constantly shuttle back and forth between them across a narrow digital bus.

This ceaseless back-and-forth movement, often referred to as the “von Neumann bottleneck,” generates tremendous heat and consumes vast amounts of energy, particularly when dealing with the massive, unstructured datasets and parallel computations inherent in modern Artificial Intelligence (AI) tasks like deep learning and real-time sensor processing.

Traditional silicon chips, regardless of how many cores they have or how high their clock speeds are, are fundamentally optimized for sequential, logical operations. That makes them inefficient at the massively parallel, probabilistic, low-precision computation that characterizes the human brain’s neural activity.

As the demand for sophisticated AI—from autonomous vehicles needing instant decision-making to massive data centers running large language models—continues its exponential climb, the electrical power and cooling requirements of these systems are quickly becoming physically and financially unsustainable, signaling a critical need for an entirely new approach to processor design.

The visionary concept of neuromorphic computing emerges from this necessity, proposing a radical shift away from the linear, energy-hungry digital model toward a computational architecture that directly mimics the incredibly efficient, parallel, and event-driven processing of biological neural tissue.

Pillar 1: Understanding the Biological Brain Model

Neuromorphic computing is fundamentally an attempt to capture and replicate the elegant efficiency and unique operational mechanisms of the human brain’s structure and function.

A. Neurons and Synapses

The brain processes information using interconnected neurons, forming the basic architecture that engineers seek to emulate.

- Neurons as Processors: In the biological brain, neurons are the fundamental processing units, acting as tiny, complex integrators of incoming electrical and chemical signals from countless other neurons. They do not have clock speeds in the digital sense.

- Synapses as Memory and Weights: The connections between neurons are called synapses. These are not just simple wires; they are dynamic, variable junctions whose strength can change over time based on activity, effectively serving as both the memory storage and the weighted parameter of the system.

- Massive Connectivity: The human brain is characterized by its massive interconnectivity, featuring an estimated 86 billion neurons linked by trillions of synapses, allowing for parallel processing on a scale unattainable by traditional chip designs.

B. The Spiking Mechanism

Biological neurons communicate using discrete, energy-efficient electrical pulses, a critical difference from continuous digital signaling.

- Event-Driven Communication: Neurons communicate using brief, asynchronous electrical pulses known as spikes or action potentials. These pulses are sent only when a neuron’s accumulated input reaches a certain voltage threshold.

- Energy Efficiency: Because neurons activate and send a signal only when necessary (event-driven), the brain consumes remarkably little power, roughly 20 watts, on tasks that can require supercomputers drawing megawatts.

- Temporal Coding: The information in the biological brain is often encoded not just in the rate of the spikes (how many per second) but also in the timing (when they occur relative to other spikes), a concept known as temporal coding.
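
The threshold behaviour described above can be sketched with a leaky integrate-and-fire (LIF) model, a common simplification that the text itself does not name. The function name, the leak factor, and the threshold below are illustrative assumptions rather than measured biological constants.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, v_reset=0.0):
    """Simulate a discrete-time leaky integrate-and-fire neuron.

    Returns the time steps at which the neuron emitted a spike.
    All parameter values are illustrative assumptions.
    """
    v = 0.0
    spike_times = []
    for t, i_in in enumerate(input_current):
        v = leak * v + i_in       # old potential leaks away, new input is added
        if v >= threshold:        # a spike is emitted only at threshold
            spike_times.append(t)
            v = v_reset           # potential resets after the spike
    return spike_times


weak = simulate_lif([0.05] * 50)    # input too weak to ever reach threshold
strong = simulate_lif([0.3] * 50)   # stronger input produces regular spikes
print(len(weak), len(strong))       # prints: 0 12
```

The weak input never produces a spike, so in an event-driven system it would trigger no downstream activity at all.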

C. Plasticity and Learning

Replicating the brain’s ability to constantly rewire itself in response to experience is the ultimate goal of any truly intelligent processor architecture.

- Synaptic Plasticity: The efficiency and learning capability of the brain stem from synaptic plasticity, the phenomenon where the strength of synaptic connections changes dynamically based on the frequency and timing of pre- and post-synaptic activity.

- Hebbian Learning: A key rule is Hebbian learning, often summarized as “neurons that fire together, wire together.” This principle guides how connections strengthen when two neurons are activated simultaneously, forming associations and memories.

- Online Learning: The brain constantly learns and adapts in real-time while operating, unlike deep learning models that typically require distinct, resource-intensive offline training periods followed by static deployment.
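
As a rough illustration of "fire together, wire together" applied online, the sketch below strengthens a weight whenever both neurons are active in the same step. The soft upper bound and the slow decay for non-coincident steps are assumptions added to keep the weight bounded; they are not part of Hebb's original rule, and all names and constants are hypothetical.

```python
def hebbian_update(weight, pre_active, post_active, lr=0.1, decay=0.01, w_max=1.0):
    """One online Hebbian-style update for a single synapse.

    The soft bound (w_max) and the decay term are assumptions for stability.
    """
    if pre_active and post_active:
        weight += lr * (w_max - weight)   # coincident activity strengthens the synapse
    else:
        weight -= decay * weight          # otherwise the synapse slowly weakens
    return weight


w_corr = 0.2     # synapse between two neurons that always fire together
w_uncorr = 0.2   # synapse between two neurons that never fire together
for step in range(20):
    w_corr = hebbian_update(w_corr, True, True)
    w_uncorr = hebbian_update(w_uncorr, step % 2 == 0, step % 2 == 1)

print(round(w_corr, 2), round(w_uncorr, 2))
```

After 20 steps the correlated synapse ends near 0.90 and the uncorrelated one near 0.16, with no separate training phase involved.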

Pillar 2: The Neuromorphic Architecture

Neuromorphic chips fundamentally restructure the relationship between processing and memory to overcome the limits of the von Neumann bottleneck.

A. Integrated Processing and Memory

The core architectural change is the physical integration of memory storage directly with the processing units (cores).

- In-Memory Computing: Neuromorphic chips aim for in-memory computing or compute-in-memory. The memory circuits are placed directly alongside, or even within, the processing elements that mimic the neurons.

- Eliminating the Bottleneck: By minimizing the need to move data over long distances across the chip, this integrated structure drastically reduces the latency and energy consumption associated with the von Neumann bottleneck, especially for highly parallel tasks.

- Massively Parallel Cores: Instead of a few powerful CPU cores, neuromorphic processors contain thousands or even millions of tiny, interconnected “neurosynaptic cores,” each containing its own local memory array representing the synapses.
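
One way to picture a core that keeps its synapses in local memory is the toy data structure below. It is a simplified sketch under stated assumptions: the class name, the dense weight table, and the reset-to-zero behaviour are all hypothetical and do not describe any particular chip.

```python
class NeurosynapticCore:
    """Toy model of a core whose synaptic weights live next to its neurons."""

    def __init__(self, weights, threshold=1.0):
        # weights[i][j]: locally stored synapse from input axon i to neuron j
        self.weights = weights
        self.threshold = threshold
        self.potentials = [0.0] * len(weights[0])

    def receive_spike(self, axon):
        """Handle one incoming spike event; return indices of neurons that fire."""
        fired = []
        for j, w in enumerate(self.weights[axon]):
            self.potentials[j] += w              # only this axon's row is read
            if self.potentials[j] >= self.threshold:
                fired.append(j)
                self.potentials[j] = 0.0         # reset after firing
        return fired


core = NeurosynapticCore([[0.6, 0.2], [0.5, 0.9]])
first = core.receive_spike(0)    # no neuron reaches threshold yet
second = core.receive_spike(1)   # both neurons cross threshold
print(first, second)             # prints: [] [0, 1]
```

Work happens only when a spike arrives, and it touches only the weights stored in that core, which is the property the bullets above describe.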

B. Spiking Neural Networks (SNNs)

The software layer of neuromorphic computing relies on a new type of artificial neural network designed to operate with discrete, timed pulses.

- Discrete Events: Spiking Neural Networks (SNNs) process information using discrete events (spikes) rather than the continuous, floating-point values used by traditional Artificial Neural Networks (ANNs).

- Biological Realism: SNNs are considered more biologically realistic than ANNs. They operate asynchronously, and on neuromorphic hardware they consume power mainly when a neuron actually fires a spike, which is the source of the large energy efficiency gains.

- Rate and Timing: Information is represented in the SNN by the firing rate of the neuron (frequency of spikes) or, more powerfully, by the precise timing of those spikes relative to the input and other neurons in the network.
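
The difference between rate coding and timing-based coding can be made concrete with two small encoders. Both are deliberately simple sketches: the deterministic accumulator for the rate code and the time-to-first-spike scheme for the timing code are illustrative choices, and the function names are hypothetical.

```python
def rate_encode(value, n_steps=20):
    """Rate code: the number of spikes is proportional to value in [0, 1]."""
    value = min(max(value, 0.0), 1.0)
    spikes, acc = [], 0.0
    for _ in range(n_steps):
        acc += value
        if acc >= 1.0:            # emit a spike each time the accumulator fills
            spikes.append(1)
            acc -= 1.0
        else:
            spikes.append(0)
    return spikes


def latency_encode(value, n_steps=20):
    """Timing code: a single spike that arrives earlier for larger values."""
    value = min(max(value, 0.0), 1.0)
    spikes = [0] * n_steps
    if value > 0:
        spikes[round((1.0 - value) * (n_steps - 1))] = 1
    return spikes


print(sum(rate_encode(0.25)), sum(rate_encode(0.75)))              # prints: 5 15
print(latency_encode(0.25).index(1), latency_encode(0.75).index(1))  # prints: 14 5
```

The timing code conveys the same ordering of values with one spike instead of many, which is one reason it is often described as the more powerful scheme.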

C. Hardware Implementation: Synaptic Devices

To physically create the dynamic, variable memory of a biological synapse, engineers are developing entirely new types of microelectronic components.

- Memristors: A leading candidate device is the memristor (memory-resistor). This two-terminal component remembers the amount of electric charge that has passed through it, allowing its resistance to represent the weight of a synaptic connection dynamically.

- Phase-Change Memory (PCM): Another approach uses phase-change memory, which can store data by changing the physical state (amorphous or crystalline) of a material, providing a non-volatile, stable, and analog weight storage mechanism.

- Analog Nature: These synaptic devices are often designed to operate in an analog fashion, using continuous resistance or conductance values to store weights, rather than the discrete binary (0 or 1) values of traditional digital memory cells.
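
To see why an analog conductance can act as a weight, consider an idealized array in which each input voltage drives a row of devices and the currents on each output column add up (Ohm's law per device, current summation per column). The crossbar arrangement and the function name are assumptions for illustration; the text above does not specify a circuit layout.

```python
def crossbar_currents(voltages, conductances):
    """Idealized analog weighted sum.

    conductances[i][j] is the conductance of the device linking input row i
    to output column j. Each column current is sum_i V_i * G_ij.
    Wire resistance, noise, and nonlinearity are ignored.
    """
    n_cols = len(conductances[0])
    return [
        sum(v * row[j] for v, row in zip(voltages, conductances))
        for j in range(n_cols)
    ]


voltages = [1.0, 0.0, 1.0]                            # inputs 0 and 2 active
conductances = [[0.5, 0.25], [1.0, 0.5], [0.25, 0.75]]  # arbitrary units
currents = crossbar_currents(voltages, conductances)
print(currents)                                       # prints: [0.75, 1.0]
```

In such an arrangement the multiply-and-add happens where the weights are stored, so no weight has to be fetched from a separate memory.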

Pillar 3: Key Advantages Over Conventional AI

The architectural shift to neuromorphic design delivers unique benefits that directly address the most pressing limitations of current AI hardware.

A. Extreme Energy Efficiency

This is arguably the most compelling advantage, enabling complex AI tasks on devices with severely limited power budgets.

- Sparsity and Asynchronicity: Because SNNs operate on sparse, event-driven data (only a few neurons spike at any moment) and function asynchronously (without a central clock), they avoid the constant power drain of traditional chips running continuous data streams.

- Orders of Magnitude Savings: Neuromorphic chips have demonstrated the ability to process complex sensor data—such as image recognition or auditory processing—at power consumption levels that are orders of magnitude lower than equivalent tasks run on GPUs or even specialized AI accelerators.

- Edge AI Empowerment: This efficiency is crucial for Edge AI applications (AI running locally on the device). Imagine complex real-time video analysis on a battery-powered security camera or drone that must run for days without recharging.
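
A back-of-the-envelope count shows where the savings from sparsity come from. The sketch compares a dense layer that touches every weight on every time step with an event-driven layer that touches weights only when an input spikes. The layer sizes and activity level are made-up numbers, and operation counts are only a rough proxy for energy.

```python
def dense_ops(n_in, n_out, n_steps):
    """Every input-output pair is processed on every time step."""
    return n_in * n_out * n_steps


def event_driven_ops(spike_trains, n_out):
    """One synaptic update per (spike, target neuron) pair."""
    total_spikes = sum(sum(train) for train in spike_trains)
    return total_spikes * n_out


n_in, n_out, n_steps = 100, 10, 50
# Hypothetical sparse activity: each input spikes exactly once in 50 steps.
spike_trains = [
    [1 if t == i % n_steps else 0 for t in range(n_steps)]
    for i in range(n_in)
]

dense = dense_ops(n_in, n_out, n_steps)
sparse = event_driven_ops(spike_trains, n_out)
print(dense, sparse, dense / sparse)   # prints: 50000 1000 50.0
```

With 2% input activity the event-driven count is 50 times smaller here; real savings depend on the hardware and on how sparse the data actually is.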

B. Real-Time, Low-Latency Processing

The structure allows for decisions to be made almost instantaneously, crucial for interaction with the real world.

- Minimal Data Movement: The compute-in-memory architecture removes the time delay (latency) associated with moving data between separate memory banks and the processing unit, resulting in much faster response times.

- Event-Driven Speed: Since processing is triggered immediately by incoming data (spikes), the chip can respond to external sensory events—like a sudden change in a camera feed—without waiting for a clock cycle or batch process to complete.

- Applications: This low-latency capability is essential for safety-critical systems, including autonomous driving (where a fraction of a second delay is catastrophic) and high-speed robotic control.

C. Sensor and Data Integration

Neuromorphic systems are uniquely suited to interface directly with novel, brain-inspired sensors, creating a seamless processing pipeline.

- Dynamic Vision Sensors (DVS): Also known as event cameras, these sensors mimic the human retina by only recording and transmitting data (spikes) when a pixel detects a change in light intensity.

- Seamless Integration: Neuromorphic processors are designed to naturally consume the event-driven output from DVS cameras without needing a resource-intensive intermediate data conversion stage, creating a highly efficient perception system.

- Auditory Processing: Similarly, event-driven silicon cochleas (electronic inner ear models) can output spike streams that are directly processed by the neuromorphic chip, ideal for low-power voice activation and continuous acoustic monitoring.
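
The change-detection principle behind an event camera can be approximated by comparing two frames and emitting an event only where the log intensity changed by more than a threshold. A real DVS pixel does this continuously and independently in analog circuitry, so the frame-based version, the threshold value, and the function name below are simplifying assumptions.

```python
import math


def frame_to_events(prev_frame, new_frame, threshold=0.2):
    """Return (x, y, polarity) events for pixels whose log intensity changed.

    polarity is +1 for brighter and -1 for darker. Unchanged pixels
    produce no output at all.
    """
    events = []
    for y, (prev_row, new_row) in enumerate(zip(prev_frame, new_frame)):
        for x, (p, n) in enumerate(zip(prev_row, new_row)):
            delta = math.log(n + 1e-6) - math.log(p + 1e-6)
            if abs(delta) >= threshold:
                events.append((x, y, 1 if delta > 0 else -1))
    return events


prev_frame = [[1.0, 1.0], [1.0, 1.0]]
new_frame = [[1.0, 2.0], [0.5, 1.0]]   # one pixel brightens, one darkens
events = frame_to_events(prev_frame, new_frame)
print(events)                          # prints: [(1, 0, 1), (0, 1, -1)]
```

Only two of the four pixels generate data, and a static scene would generate none, which is what lets the downstream processor stay idle.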

Pillar 4: Current Challenges and Research Frontiers

Despite the profound potential, neuromorphic computing faces significant hurdles in both hardware engineering and algorithmic development before it can achieve widespread commercialization.

A. Algorithmic and Training Difficulty

The software required to program these chips is fundamentally different and often much harder to develop than that for traditional GPUs.

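- New Learning Rules: Standard deep learning algorithms (like backpropagation) are designed for continuous, differentiable ANNs and do not apply directly to discrete spikes. SNNs are instead trained with biologically inspired local rules such as Spike-Timing-Dependent Plasticity (STDP), or with adaptations of backpropagation such as surrogate-gradient methods.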

- Training Instability: Training SNNs is often less stable and more computationally complex than training ANNs because the discrete nature of the spikes makes gradient calculation difficult and noisy.

- Tooling and Ecosystem: The development ecosystem—compilers, libraries, and frameworks—for SNNs and neuromorphic hardware is still nascent and highly fragmented compared to the mature software stacks available for GPU-based deep learning.
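
For readers unfamiliar with STDP, the commonly used pair-based form can be sketched as follows: a synapse is strengthened when the presynaptic spike precedes the postsynaptic one and weakened in the opposite order, with an effect that decays exponentially with the time gap. The amplitudes and time constant are illustrative assumptions, and other STDP variants exist.

```python
import math


def stdp_delta(t_pre, t_post, a_plus=0.05, a_minus=0.055, tau=20.0):
    """Weight change for one pre/post spike pair (times in milliseconds).

    Pre before post: potentiation. Post before pre: depression.
    Constants are illustrative, not fitted to data.
    """
    dt = t_post - t_pre
    if dt > 0:
        return a_plus * math.exp(-dt / tau)
    if dt < 0:
        return -a_minus * math.exp(dt / tau)
    return 0.0


causal = stdp_delta(t_pre=10.0, t_post=15.0)      # pre fires 5 ms before post
anti_causal = stdp_delta(t_pre=15.0, t_post=10.0)  # post fires 5 ms before pre
distant = stdp_delta(t_pre=10.0, t_post=40.0)      # same order, 30 ms apart
print(causal > 0, anti_causal < 0, distant < causal)   # prints: True True True
```

The rule needs only the two spike times local to a synapse, which is why it maps naturally onto hardware where each synapse is its own memory element.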

B. Hardware Scalability and Manufacturing

Creating billions of reliable, variable synaptic components presents massive challenges to current microchip fabrication techniques.

- Analog Variability: Synaptic devices like memristors often exhibit analog variability and drift over time and temperature, making it difficult to maintain precise, stable synaptic weights required for reliable computation.

- Reliability and Yield: Manufacturing billions of these novel, non-traditional components on a single chip with high yield and reliability remains a significant barrier to cost-effective mass production and deployment.

- Hybrid Approach: Many current chips employ a hybrid approach, using standard digital CMOS technology to emulate the SNNs, which is easier to manufacture but loses some of the pure analog energy efficiency benefits.
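
The effect of analog variability can be illustrated with a small simulation in which every stored weight is read back with a random relative error. The Gaussian error model, the sigma values, and the function names are assumptions chosen for simplicity; real devices also show drift and nonlinear behaviour that this sketch ignores.

```python
import random


def noisy_weighted_sum(inputs, weights, sigma, rng):
    """Weighted sum where each weight is read with relative Gaussian error."""
    return sum(
        x * w * (1.0 + rng.gauss(0.0, sigma)) for x, w in zip(inputs, weights)
    )


def mean_abs_error(inputs, weights, sigma, trials=200, seed=0):
    """Average deviation from the ideal weighted sum over repeated reads."""
    rng = random.Random(seed)   # fixed seed keeps the sketch reproducible
    ideal = sum(x * w for x, w in zip(inputs, weights))
    total = 0.0
    for _ in range(trials):
        total += abs(noisy_weighted_sum(inputs, weights, sigma, rng) - ideal)
    return total / trials


inputs = [1.0, 0.5, 0.25, 1.0]
weights = [0.2, -0.4, 0.8, 0.1]
for sigma in (0.0, 0.05, 0.2):
    print(sigma, mean_abs_error(inputs, weights, sigma))
```

The error is zero for ideal devices and grows with the spread of the stored values, which is why digital emulation trades some efficiency for predictability.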

C. Benchmarking and Comparison

It remains difficult to directly compare the performance and efficiency of neuromorphic systems against established benchmarks.

- Non-Standard Metrics: Traditional metrics like Floating-Point Operations Per Second (FLOPS) map poorly onto neuromorphic chips, which are instead described with less standardized metrics like Synaptic Operations Per Second (SOPS) or Spikes Per Second (SPS).

- Task Difference: Neuromorphic chips currently excel at specific, constrained tasks (like pattern detection and acoustic classification), but their performance on complex, general-purpose tasks like training large language models is still unproven or computationally impractical.

- Ecosystem Lock-in: Current commercial neuromorphic chips often require specialized software stacks and are tied to a specific manufacturer’s hardware, creating ecosystem lock-in and hindering open research and standardization.
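
As a rough guide to how a metric like SOPS is derived, the sketch below counts one synaptic operation per spike per target synapse and then divides power by throughput to get energy per operation. The exact definition varies between vendors and papers, and every number used here is hypothetical rather than a measurement of any chip.

```python
def synaptic_ops_per_second(total_spikes, avg_fanout, duration_s):
    """SOPS under the assumption of one operation per spike per target synapse."""
    return total_spikes * avg_fanout / duration_s


def energy_per_synaptic_op(power_watts, sops):
    """Joules spent per synaptic operation at a given average power."""
    return power_watts / sops


# Hypothetical workload: 2 million spikes in 2 seconds, 100 targets per spike.
sops = synaptic_ops_per_second(total_spikes=2_000_000, avg_fanout=100, duration_s=2.0)
# Hypothetical average power draw of 50 milliwatts.
joules_per_op = energy_per_synaptic_op(power_watts=0.05, sops=sops)
print(sops, joules_per_op)
```

Because the operation count depends on how spikes and fan-out are defined, two chips reporting the same SOPS figure are not necessarily doing the same amount of useful work.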

Pillar 5: Leading Commercial and Research Efforts

Major technology firms and research institutes are making significant investments in the development and commercialization of powerful, next-generation neuromorphic hardware platforms.

A. Intel’s Loihi Platform

Intel has released multiple generations of its Loihi research processor, focusing on practical research and application development.

- Architecture: Loihi features a fully asynchronous, digital neuromorphic core architecture designed to implement SNNs, integrating memory and compute on the same chip.

- Efficiency Focus: Intel has reported that Loihi can perform tasks such as real-time gesture recognition and seismic event detection with up to 1,000 times lower energy consumption than conventional processors.

- Developer Access: The platform is accessible to researchers globally through Intel’s Neuromorphic Research Community, providing a crucial environment for developing SNN algorithms and use cases across various scientific domains.

B. IBM’s TrueNorth Chip

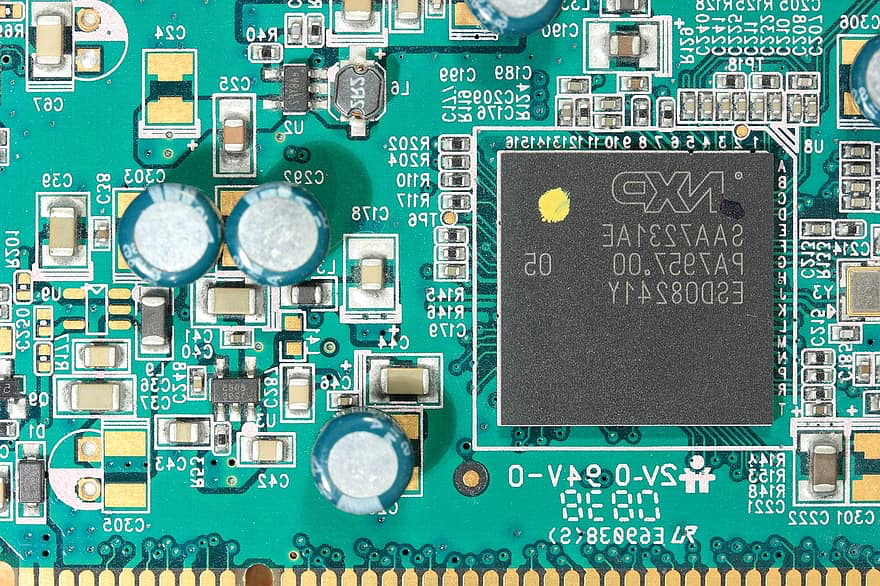

TrueNorth is one of the pioneering, highly scalable neuromorphic chip designs and provided an early benchmark for massive-scale integration.

- Fixed Architecture: TrueNorth was designed with a fixed, highly parallel architecture featuring 1 million digital neurons and 256 million programmable synapses, prioritizing massive scalability and low power consumption over algorithmic flexibility.

- Application: It was demonstrated to be highly effective at real-time sensory processing tasks like pattern recognition and classification, operating at ultra-low power levels ideal for mobile and embedded applications.

- Digital Implementation: TrueNorth primarily uses standard digital CMOS technology to emulate the SNNs, demonstrating that massive parallelization can be achieved even without relying on novel, analog synaptic devices.

C. European Initiatives and Academic Research

Academic groups and European research consortia are focusing heavily on the theoretical and materials science aspects of neuromorphic computing.

- Material Science: Much of the cutting-edge research focuses on developing the next generation of analog synaptic devices (like memristors) with better stability, faster switching times, and lower energy consumption characteristics.

- Analog vs. Digital: Research continues into the optimal balance between analog implementation (which offers the greatest potential for power efficiency) and digital emulation (which offers greater stability and ease of manufacturing).

- The Human Brain Project (HBP): Large-scale collaborative efforts like the HBP seek to develop comprehensive software and hardware platforms to simulate and understand the brain, with neuromorphic technology as a key beneficiary.

Pillar 6: Future Implications and Transformative Applications

The successful deployment of neuromorphic processors promises to unlock levels of AI capability and efficiency that were previously considered impossible.

A. Ubiquitous and Personalized AI

Neuromorphic chips will enable advanced intelligence to be everywhere, embedded into the environment and personal devices we use every day.

- Smart Sensors: Every smart sensor—be it in a building, a piece of industrial machinery, or a forest monitoring system—could contain a neuromorphic chip to process data locally and intelligently, communicating only the most vital information.

- Hearing Aids and Prosthetics: The low power, real-time processing capability makes these chips ideal for medical devices like advanced hearing aids that can instantly filter noise or intelligent prosthetics that adapt to muscle signals immediately.

- Personalized Learning: Future devices could use neuromorphic processing to execute continuous, personalized learning right on the device, adapting their behavior to the individual user without sending private data to the cloud.

B. Scalable Data Center Acceleration

Even the largest AI data centers can benefit immensely from the unique strengths of neuromorphic hardware for specific computational bottlenecks.

- Pre-Processing Filters: Neuromorphic processors can serve as ultra-efficient pre-processing filters for massive unstructured data streams (e.g., millions of daily security camera feeds), classifying and routing only the relevant events to power-hungry GPU clusters for deeper analysis.

- Efficient Inference: While training large LLMs remains a challenge, running the inference (deployment) phase of these models on dedicated, highly optimized neuromorphic hardware promises immense gains in speed and efficiency once the algorithms mature.

- Bio-Inspired Optimization: The core principles of brain sparsity and event-driven computation could inspire new optimization techniques even for traditional digital hardware, guiding the design of more efficient data movement and memory access patterns.

C. Next-Generation Robotics and Autonomy

Neuromorphic technology is a critical enabler for true autonomy, allowing robots to perceive and interact with the world like living organisms.

- Real-Time Perception: Robots using DVS cameras and neuromorphic processors could achieve sub-millisecond perception and response times to sudden changes in their environment, allowing for smoother, safer, and faster movement.

- Integrated Control: The chips can efficiently run motor control loops and sensor fusion algorithms in parallel, leading to more flexible and robust robotic control systems that mimic the seamless sensory-motor integration of animals.

- Unsupervised Learning: The future lies in SNNs that can perform significant amounts of unsupervised or reinforcement learning on the fly, allowing a robot to rapidly adapt its skills and behaviors to entirely new, unmapped environments after initial deployment.

Conclusion: The Final Frontier of Computing

Neuromorphic computing represents a radical and necessary divergence from the traditional, power-hungry von Neumann architecture.

This paradigm shift is driven by the goal of mimicking the extreme energy efficiency and massive parallelism of the biological brain’s spiking neural operation.

The core technological advance is the physical integration of memory and processing, which sharply reduces the energy consumption and latency imposed by the von Neumann bottleneck.

Spiking Neural Networks (SNNs) are the new algorithmic model, utilizing discrete, asynchronous events (spikes) instead of continuous numerical values to communicate information.

This architecture delivers orders of magnitude greater power efficiency than conventional processors, making it uniquely suited for Edge AI and battery-powered applications.

Despite challenges in algorithmic stability and the manufacturing of reliable analog synaptic devices, research efforts led by Intel and IBM continue to drive rapid hardware innovation.

The successful deployment of this technology promises to enable truly ubiquitous, real-time, and ultra-low-power artificial intelligence across autonomous systems, robotics, and smart sensors worldwide.